Real-time systems are about latency guarantees, and how long a task can monopolize a core without being switched away from is an important scheduler consideration.

[8] examined effective throughput optimization in random spatial networks with a Poisson interference process under the constraints of ...

To measure throughput, you need to count the work items delivered in your workflow each day. Let's say that your team completes 3 tasks on Monday, 2 on Tuesday, 3 on Wednesday, 4 on Thursday, and 5 on Friday. You can easily calculate the average throughput rate of your team: (3 + 2 + 3 + 4 + 5) / 5 = 3.4 tasks per day. Now you know that your team can deliver 3.4 tasks per day on average.
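As a small illustration of the average throughput calculation for the sample week (3, 2, 3, 4 and 5 tasks), here is a minimal sketch in C. The function name `average_throughput` and its parameters are made up for this example; it simply divides the total number of delivered items by the number of days.

```c
#include <assert.h>
#include <stddef.h>

/* Average throughput: total delivered items divided by the number of days.
 * The name and signature are hypothetical, chosen only for illustration. */
double average_throughput(const int *delivered_per_day, size_t days)
{
    assert(days > 0); /* an average over zero days is undefined */

    long total = 0;
    for (size_t i = 0; i < days; i++)
        total += delivered_per_day[i];

    return (double)total / (double)days;
}
```

Called with the array `{3, 2, 3, 4, 5}` and `days = 5`, the function sums 17 delivered tasks and divides by 5, which matches the 3.4 tasks per day worked out by hand.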

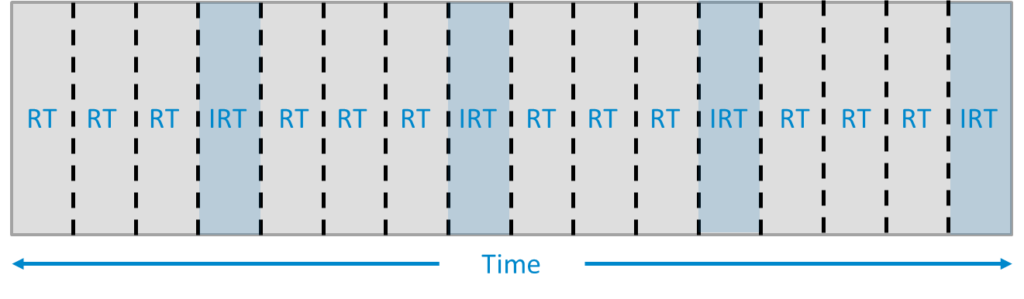

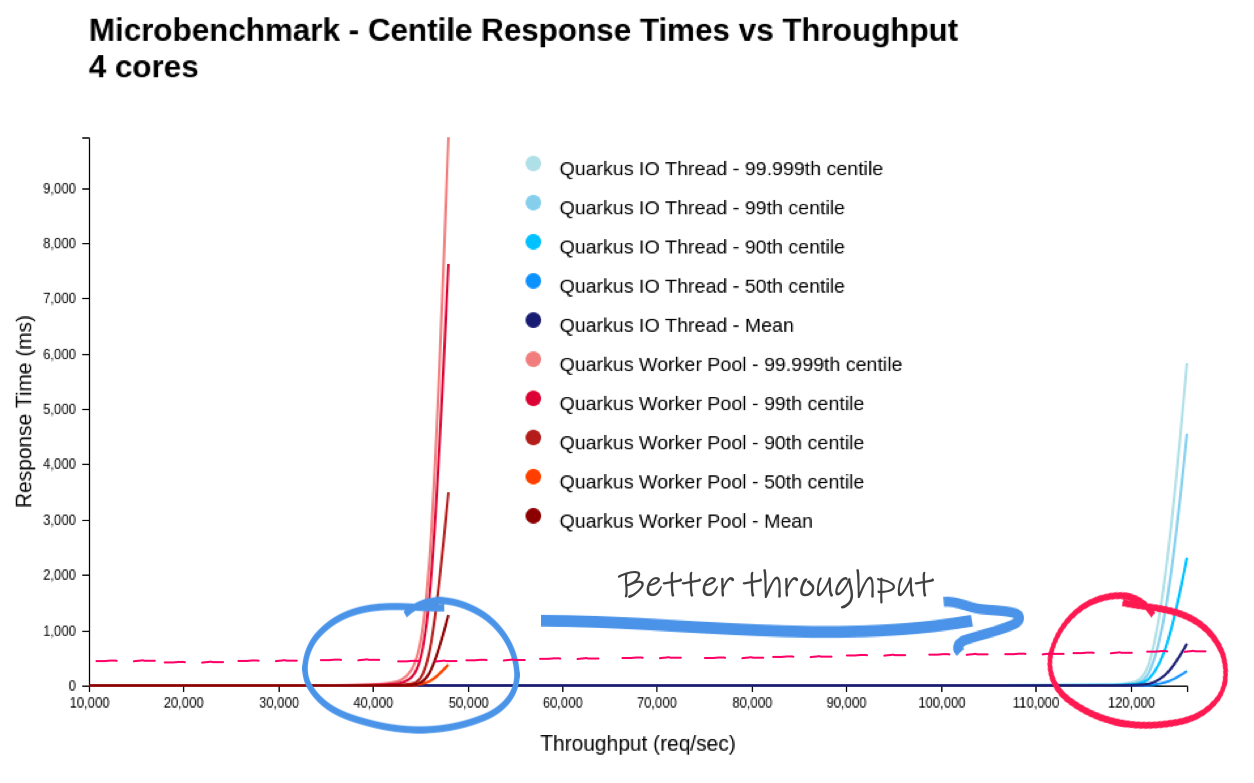

The important factor here is what happens if you run more threads or processes (tasks) than you have physical cores: with a 1000 ms round-robin timeslice, once all your cores were occupied with those tasks, nothing else in user-space would get a timeslice for a whole second, not even your X server or terminal emulator. That's a surprisingly high number of context switches, I think.

Anyway, realtime scheduling isn't about optimizing for overall throughput, which is what you're measuring. I think it doesn't count as a context switch when the kernel chooses to return to the same user-space task that was interrupted (by a timer interrupt or whatever at the end of its timeslice). If the time slice is very short, the scheduler itself takes up more processing time.

The length of each time slice can be critical for balancing CPU performance against responsiveness. The scheduler runs once every time slice to choose the next process to run.
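To get a rough feel for this trade-off, the sketch below uses a deliberately simple model; it is not how the Linux scheduler accounts for time. It assumes a CPU-bound task that uses every slice in full, and a fixed cost per switch. The function names and the switch cost parameter are hypothetical.

```c
#include <assert.h>

/* Number of time slices a CPU-bound task needs to accumulate runtime_ms of
 * CPU time, rounded up. This is only an upper bound on how often the
 * scheduler could switch the task out at the end of a slice. */
unsigned long long slices_needed(unsigned long long runtime_ms,
                                 unsigned long long timeslice_ms)
{
    assert(timeslice_ms > 0);
    return (runtime_ms + timeslice_ms - 1) / timeslice_ms;
}

/* Fraction of CPU time lost to switching if every slice ends in a switch
 * that costs switch_cost_us microseconds (an assumed, illustrative value). */
double switch_overhead_fraction(double timeslice_us, double switch_cost_us)
{
    assert(timeslice_us > 0.0 && switch_cost_us >= 0.0);
    return switch_cost_us / (timeslice_us + switch_cost_us);
}
```

Under this model a task needing 10,000 ms of CPU time spans 10,000 slices at a 1 ms timeslice but only 10 slices at 1000 ms, and the overhead fraction shrinks as the slice grows. Whether each slice boundary actually becomes a context switch depends on whether another runnable task is waiting for that core.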

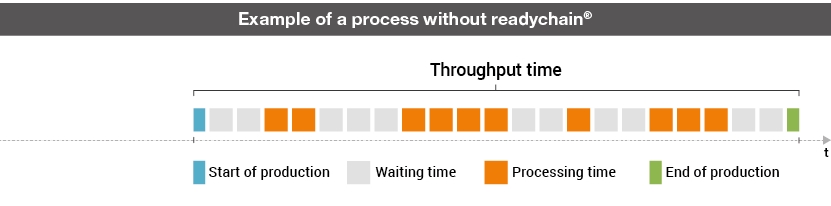

Time slice: the time frame for which a process is allotted to run on the CPU in a preemptive multitasking system.

Throughput time = processing time + inspection time + move time + queue time.

It focuses on the FPGA-based Layer 2 TSON metro node system. The experimentally measured results show exceptional performance of up to 8.68 Gbps throughput per ... They cannot fully explore the performance of parallel applications, because they ignore the time-slice requirements of different phases of parallel ...

I have tested the code with two kernel.sched_rr_timeslice_ms values.

```c
int policy = sched_getscheduler(pid_num);
switch (policy) {
    case SCHED_OTHER: printf("SCHED_OTHER\n"); break;
    case SCHED_RR:    printf("SCHED_RR\n");    break;
    case SCHED_FIFO:  printf("SCHED_FIFO\n");  break;
}

/* CPU-bound busy loop used as the workload */
for (unsigned long long i = 0; i < 30000000000ULL; i++)
    ;

/* this call is cut off in the source */
int ret = sched_setscheduler(pid_num, SCHED_RR,
```

The perf result shows:

```
$ sudo sysctl kernel.sched_rr_timeslice_ms=1
$ perf stat -a -e instructions,cycles,context-switches,cpu-migrations - sudo.
Performance counter stats for 'system wide':
120,100,665,611 instructions # 3.98 insn per cycle

$ sudo sysctl kernel.sched_rr_timeslice_ms=1000
120,094,568,266 instructions # 3.98 insn per cycle
```

With respect to the large change in RR time slice, the run time, context switches and other parameters are the same. I wonder what the result of modifying that kernel parameter is, then? I expect that a larger RR time slice results in fewer context switches, at least.
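For anyone who wants to check which policy and round-robin interval a process actually ends up with, here is a minimal sketch built on the standard `sched_setscheduler`, `sched_getscheduler` and `sched_rr_get_interval` calls. It is not the original test program: the helper names are invented for this example, and the real-time priority passed to `try_set_rr` is a hypothetical value chosen by the caller, since the original call is cut off.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <sched.h>
#include <stdio.h>
#include <string.h>
#include <sys/types.h>
#include <time.h>

/* Map a policy constant returned by sched_getscheduler() to a name. */
const char *policy_name(int policy)
{
    switch (policy) {
    case SCHED_OTHER: return "SCHED_OTHER";
    case SCHED_RR:    return "SCHED_RR";
    case SCHED_FIFO:  return "SCHED_FIFO";
    default:          return "unknown";
    }
}

/* Try to switch pid (0 means the calling process) to SCHED_RR.
 * rt_priority is an assumed value, not taken from the original post.
 * Returns 0 on success, -1 on failure (typically EPERM without root). */
int try_set_rr(pid_t pid, int rt_priority)
{
    struct sched_param param;

    assert(rt_priority >= sched_get_priority_min(SCHED_RR));
    assert(rt_priority <= sched_get_priority_max(SCHED_RR));

    memset(&param, 0, sizeof param);
    param.sched_priority = rt_priority;
    return sched_setscheduler(pid, SCHED_RR, &param);
}

/* Round-robin interval of pid in milliseconds, or -1.0 on error. */
double rr_interval_ms(pid_t pid)
{
    struct timespec ts;

    if (sched_rr_get_interval(pid, &ts) != 0)
        return -1.0;
    return (double)ts.tv_sec * 1000.0 + (double)ts.tv_nsec / 1e6;
}

/* Print the current policy and round-robin interval of pid. */
void report_scheduling(pid_t pid)
{
    int policy = sched_getscheduler(pid);

    if (policy == -1) {
        perror("sched_getscheduler");
        return;
    }
    printf("policy: %s\n", policy_name(policy));

    double ms = rr_interval_ms(pid);
    if (ms >= 0.0)
        printf("RR interval: %.3f ms\n", ms);
}
```

A `main` that calls `try_set_rr(0, 1)` and then `report_scheduling(0)`, run with sufficient privileges, should show whether the process is really under SCHED_RR and whether the interval follows the `kernel.sched_rr_timeslice_ms` setting before the busy loop starts.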
